Following on nicely from Paul’s discussion of direct-to-brain broadband – and Robert Koslover’s comment – here we have news of the first read-write brain electrode from a company called IMEC:

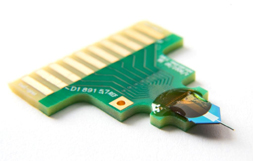

Today’s deep-brain stimulation probes use millimeter-size electrodes. These stimulate, in a highly unfocused way, a large area of the brain and have significant unwanted side effects.

…

IMEC’s design and modeling strategy allows developing advanced brain implants consisting of multiple electrodes enabling simultaneous stimulation and recording. This strategy was used to create prototype probes with 10 micrometer-size electrodes and various electrode topologies.

…

These new design approaches open up possibilities for more effective stimulation with less side effects, reduced energy consumption due to focusing the stimulation current on the desired brain target, and closed-loop control adapting the stimulation based on the recorded effect.

Presumably the route to devices for direct-to-brain broadband will run through ever more sophisticated products of this kind, possibly travelling via wirehead-style ecstasy generators.

[from this press release from IMEC, via Technovelgy] [image from IMEC press release]

This speculative futurism thing is starting to spread! Keir Thomas, Linux columnist for PC World, has posted

Most attempts to simulate the function of organic brains using computers have been software simulations – models built with code, if you like. An international team of computer scientists have been trying the other approach, however: